If you look at the stock market, you’ll notice incredibly high valuations for companies connected to artificial intelligence. Is this a bubble or a fair price? Many draw parallels with the dot-com bubble. But MIT economist Ricardo Caballero says it might be both at the same time. Let’s figure out how that’s even possible.

Marginal Returns

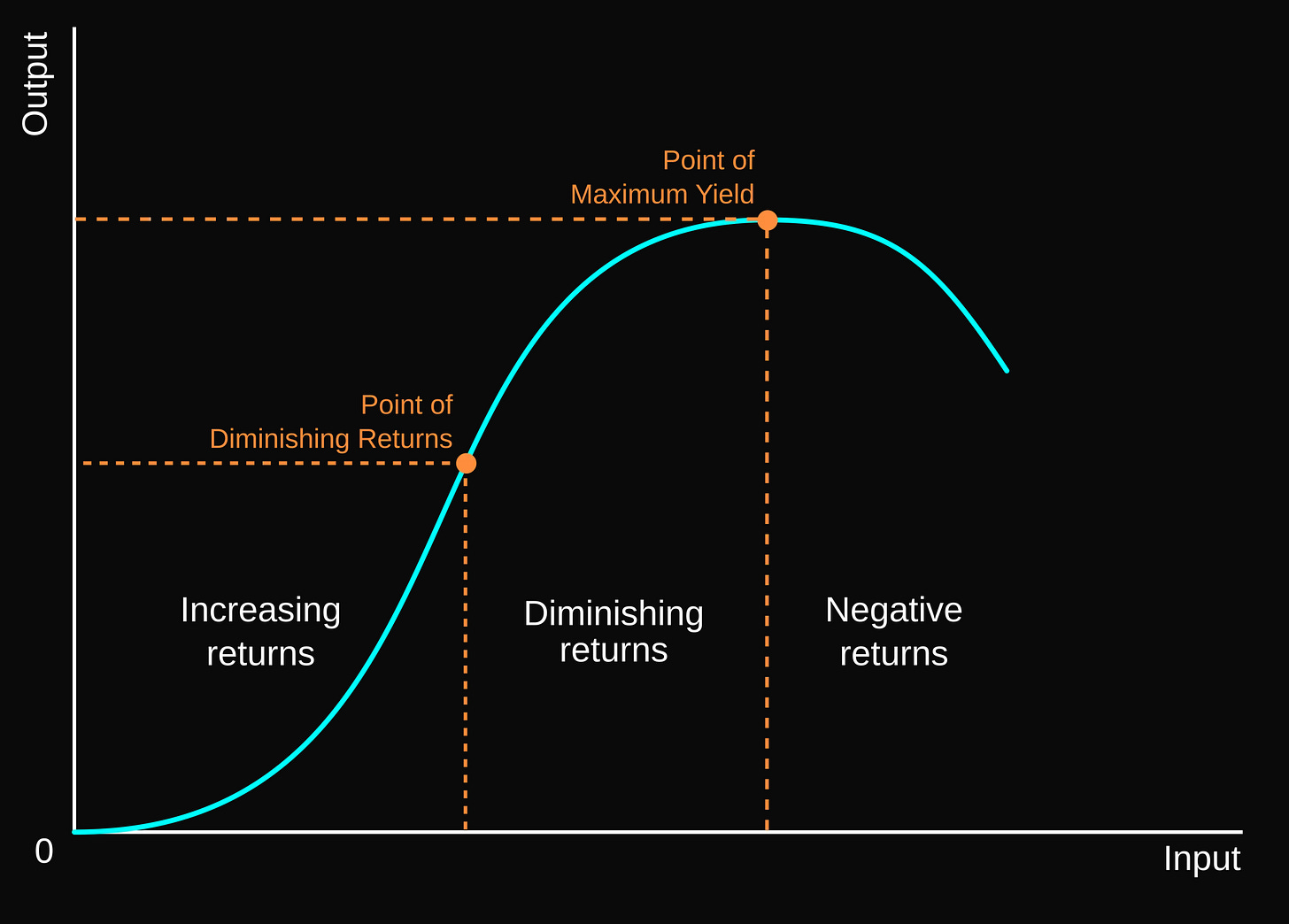

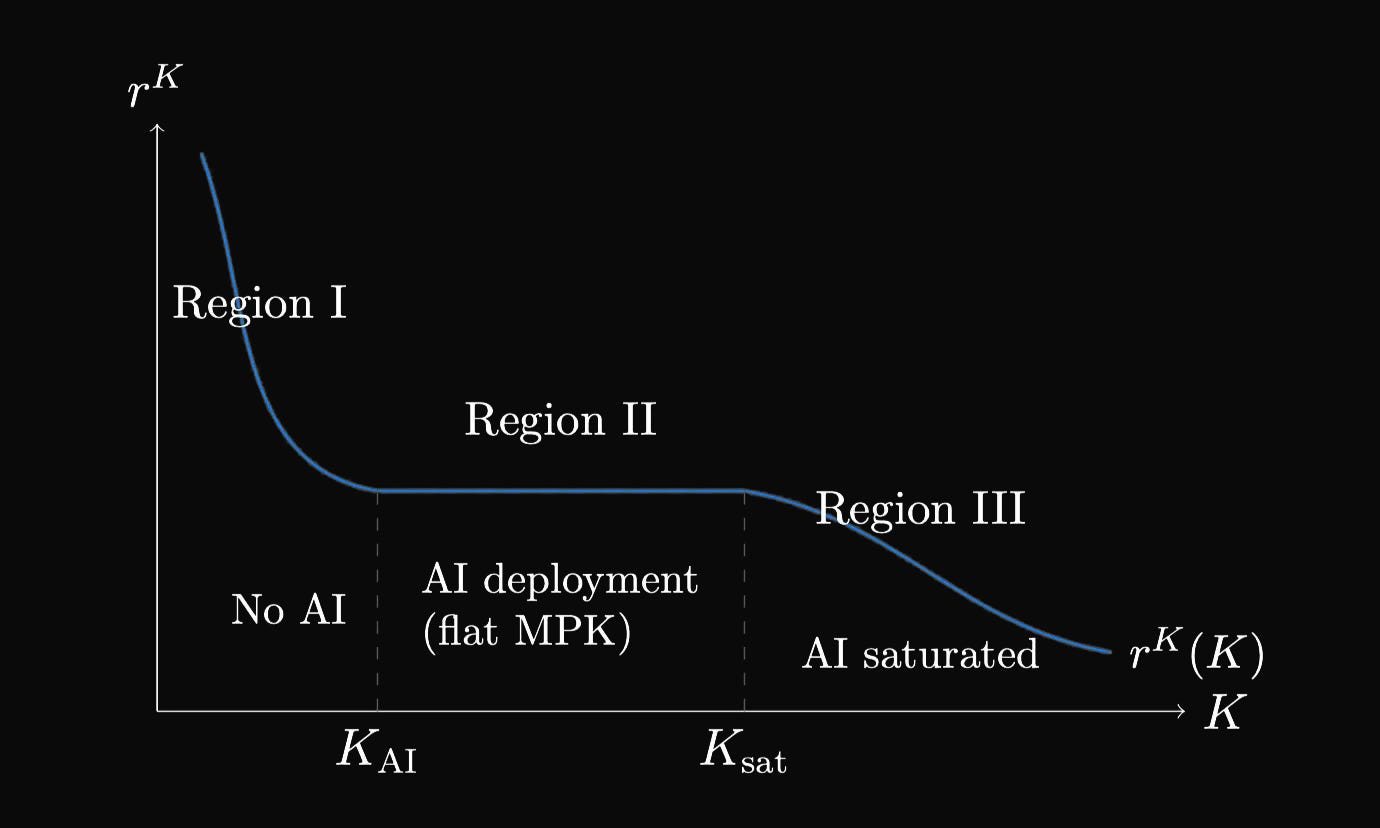

In a normal economy there’s a simple rule: the more capital you accumulate, the smaller the return from each additional dollar invested. This is called diminishing marginal returns.

Take a hypothetical company building a mobile app. The law of diminishing marginal returns explains what happens when you keep adding developers to a project with a fixed architecture and codebase.

The increasing returns phase starts with a single developer who writes the backend, builds the interface, handles testing, and talks to the client. When the company hires a second developer, specialization emerges: one focuses on the backend, the other on the frontend. They work in parallel on different modules, code review improves, bugs get caught faster. Productivity grows faster than simple doubling.

When a third developer is hired, say a UI/UX specialist or QA engineer, the team reaches peak synergy. The optimal team size for a mid-complexity project is 3-7 people. A study of 491 projects showed that teams of 3-7 developers demonstrate the best productivity and code quality.

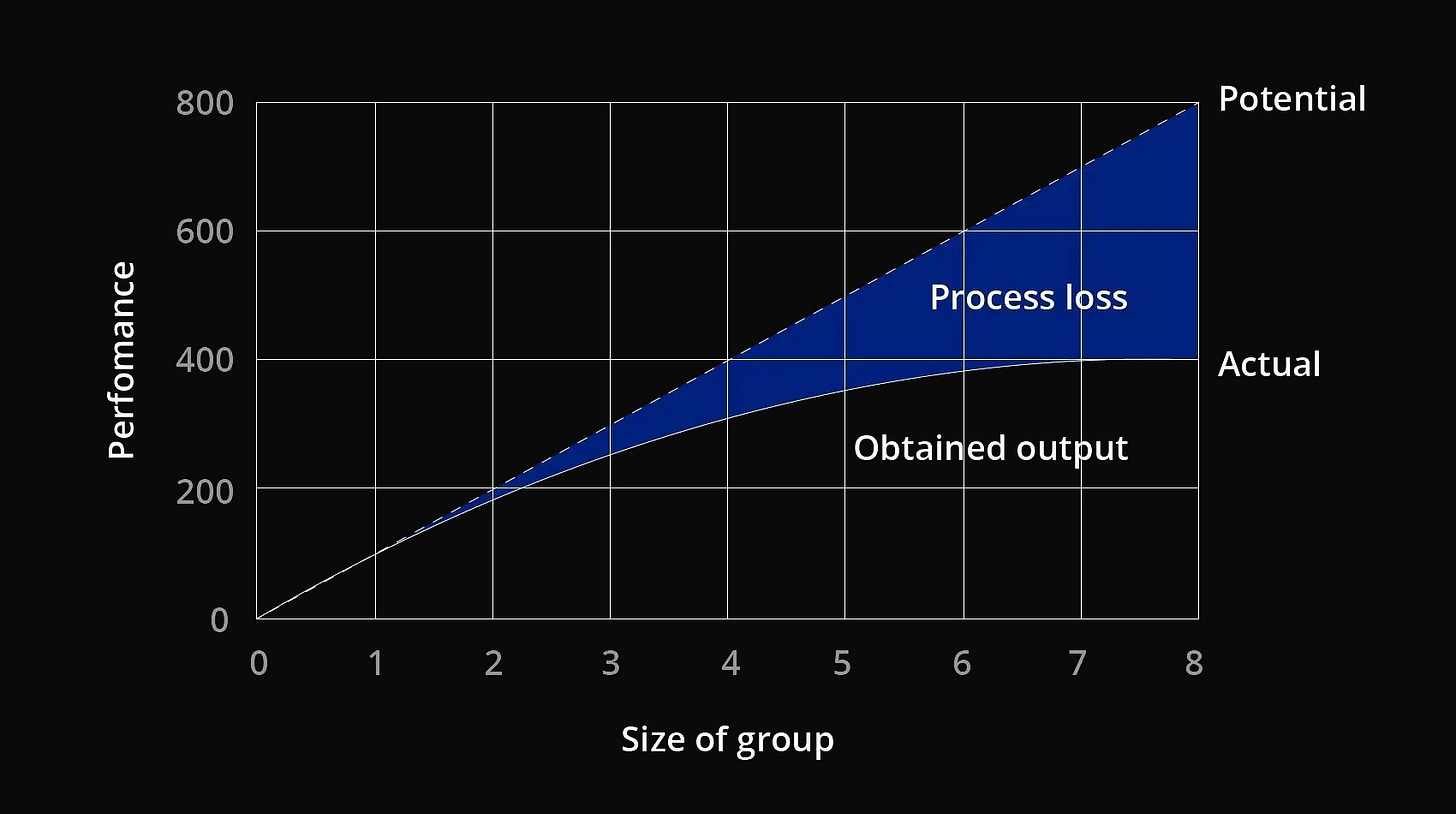

This is also confirmed by the Ringelmann effect. A company can achieve far more with more team members, but individual members of a large team are less productive than their counterparts in smaller ones. According to this theory, the individual effort of each group member declines as group size grows.

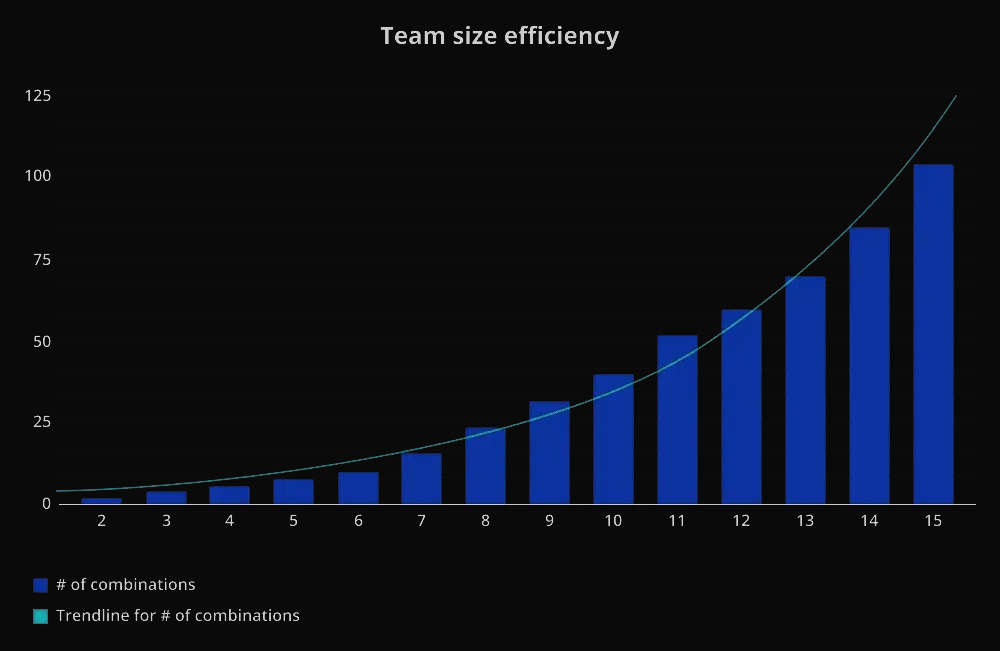

The diminishing returns phase begins around the 4th or 5th developer. Features are still being added, but not as fast as before. The architecture is already set, the critical modules are occupied, and developers spend more and more time on calls, aligning interfaces, and waiting for dependent tasks to complete. The number of communication channels grows by the formula N(N-1)/2. For 5 people that’s 10 channels, but for 8 people it’s already 28. The chart shows what this quadratic growth in communication channels looks like as team size increases.
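The N(N-1)/2 formula is easy to check by hand. Here is a minimal Python sketch; the function name `communication_channels` and the sample team sizes are chosen purely for illustration.

```python
def communication_channels(team_size: int) -> int:
    """Number of distinct pairs in a team of the given size: N(N-1)/2."""
    return team_size * (team_size - 1) // 2


# Sample team sizes, including the 5- and 8-person teams from the text.
for n in (2, 3, 5, 8, 10, 15):
    print(f"{n:>2} people -> {communication_channels(n):>3} channels")
```

Going from 5 to 15 people triples the headcount but multiplies the number of channels by more than ten (10 versus 105).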

Each new developer still adds value, but their contribution is smaller than the previous one’s, since more time goes to communication and coordination than to actual code.

Negative returns can set in when the team grows to 10-15 developers without corresponding changes to architecture and processes. The project becomes a coordination nightmare. The point of maximum output is passed and productivity falls, because the team spends more time on synchronization and resolving merge conflicts than on new work.

Each new developer isn’t just useless but actively adds communication overhead for everyone else, slowing the project down. This phenomenon was described by Fred Brooks back in 1975 in “The Mythical Man-Month”:

“Adding manpower to a late software project makes it later.”

This whole construction worked perfectly well until a new factor appeared: AI. It’s not just equipment that helps workers; it can itself perform some tasks that previously only people could do. If you add AI capital into this chain, you simultaneously add “effective labor.”

But how is AI different from the first machines and steam engines? Those also sharply increased labor productivity. Maybe this is just a new industrialization? That comparison doesn’t quite hold.

The first wave of industrialization (steam engines, looms, assembly lines, etc.) did make individual workers far more productive. One person with a machine produced as much as five without one. In the terminology, this is also growth in “effective labor,” since output per worker grows. But there’s one important detail: the number of people barely changed, while capital kept increasing. The “capital per worker” ratio grew, and the marginal return from each new machine eventually began to fall. The first machine produces a huge gain, but the hundredth will make a barely noticeable difference. And we’re back to classical diminishing returns.

With AI the logic is different: it represents a special type of capital that behaves not merely as a labor amplifier but as labor itself. This means that each additional piece of AI capital adds not just capacity but new virtual workers. You didn’t just buy a server; you bought a server plus ten digital employees who themselves read, think, write, and review. As more such AI agents are added, both capital and the effective number of workers grow.

Effective Labor

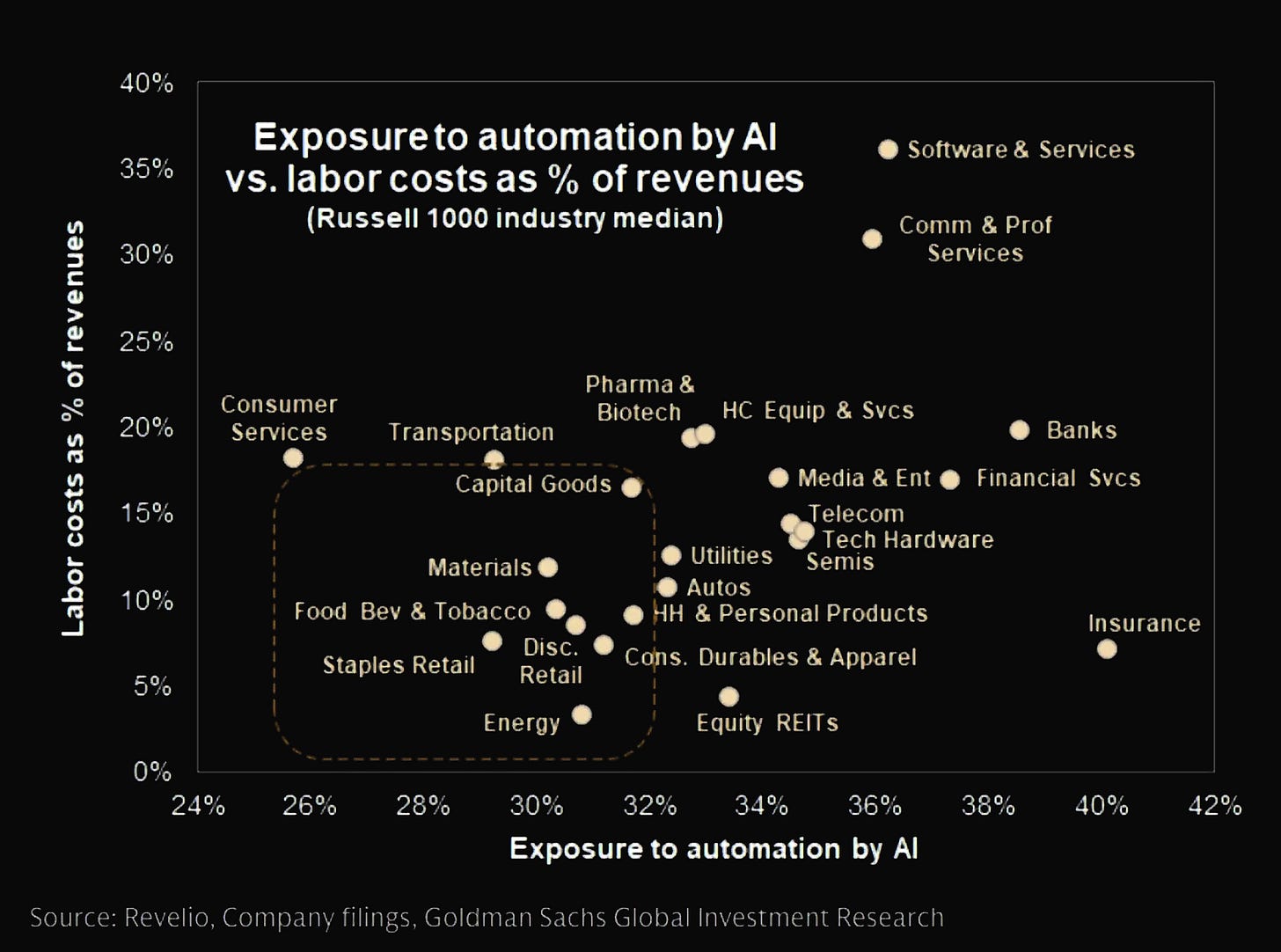

The Goldman Sachs chart plots how exposed different sectors of the economy are to AI automation against their labor costs as a share of revenue. It shows exactly where the risk of human labor displacement is concentrated.

Now imagine that a company bought not just a GPU cluster but a cluster plus thousands of virtual programmers, data analysts, and copywriters who work 24/7. As a result, the ratio of IT capital to effective labor stays roughly constant, and the return on infrastructure doesn’t fall but holds at a stable level. There’s an entire range of compute expansion where marginal returns stay flat rather than declining. That’s the first key point.
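To make the contrast concrete, here is a rough sketch that assumes a Cobb-Douglas production function, Y = K^α · L^(1-α). That functional form, the capital share `ALPHA`, and the one-to-one scaling of virtual workers with capital are all illustrative assumptions, not parameters from the Caballero model.

```python
ALPHA = 0.3  # hypothetical capital share, chosen only for illustration


def marginal_return(capital: float, labor: float, alpha: float = ALPHA) -> float:
    """Marginal product of capital for Y = K**alpha * L**(1 - alpha)."""
    return alpha * (labor / capital) ** (1 - alpha)


def classical_labor(capital: float) -> float:
    """Classical case: the workforce stays fixed however much capital is added."""
    return 1.0


def ai_labor(capital: float, workers_per_unit: float = 1.0) -> float:
    """AI case (assumption): effective labor scales in proportion to capital."""
    return workers_per_unit * capital


for k in (1.0, 2.0, 4.0, 8.0):
    fixed = marginal_return(k, classical_labor(k))
    scaled = marginal_return(k, ai_labor(k))
    print(f"K={k:>3}: fixed labor {fixed:.3f}, AI-scaled labor {scaled:.3f}")
```

With a fixed workforce the marginal return shrinks as K grows. When effective labor rises in step with capital, the capital-to-labor ratio is constant and the marginal return stays flat.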

Now add the second mechanism: wealth distribution in the IT industry. AI shifts income from engineers and programmers to technology company owners. Why? Because AI replaces some high-paid specialists and the share of wages in revenue falls. The shareholders’ share of profit grows in turn.

When wealth concentrates among venture capitalists and founders of tech giants, their savings rate increases. A Silicon Valley billionaire doesn’t spend all their capital on consumption; they reinvest an enormous share back into startups and infrastructure. This is confirmed in the joint research by Piketty and Zucman based on data for wealthy countries, showing that the ratio of total wealth to income grew over recent decades (from 200-300% in 1970 to 400-600% by 2010).

Another article by Zucman on increasing global wealth inequality shows that the share of wealth belonging to the top 1% of the population has grown noticeably since the 1980s, while the share held by the bottom 75% has remained low. Wealth is becoming more concentrated, especially among those who already hold substantial financial resources, meaning the potential sources of large-scale ecosystem investment.

This means that in the IT ecosystem, the aggregate supply of capital available for investment is growing, and the required return on that capital falls. In other words, as tech capitalists get richer, they’re willing to fund projects at lower rates of return, simply because they have more money to reinvest into the ecosystem. This is called the “capital feedback cycle,” where capital works as a closed cycle. That’s why we see exponential growth in CapEx.

Three Equilibria

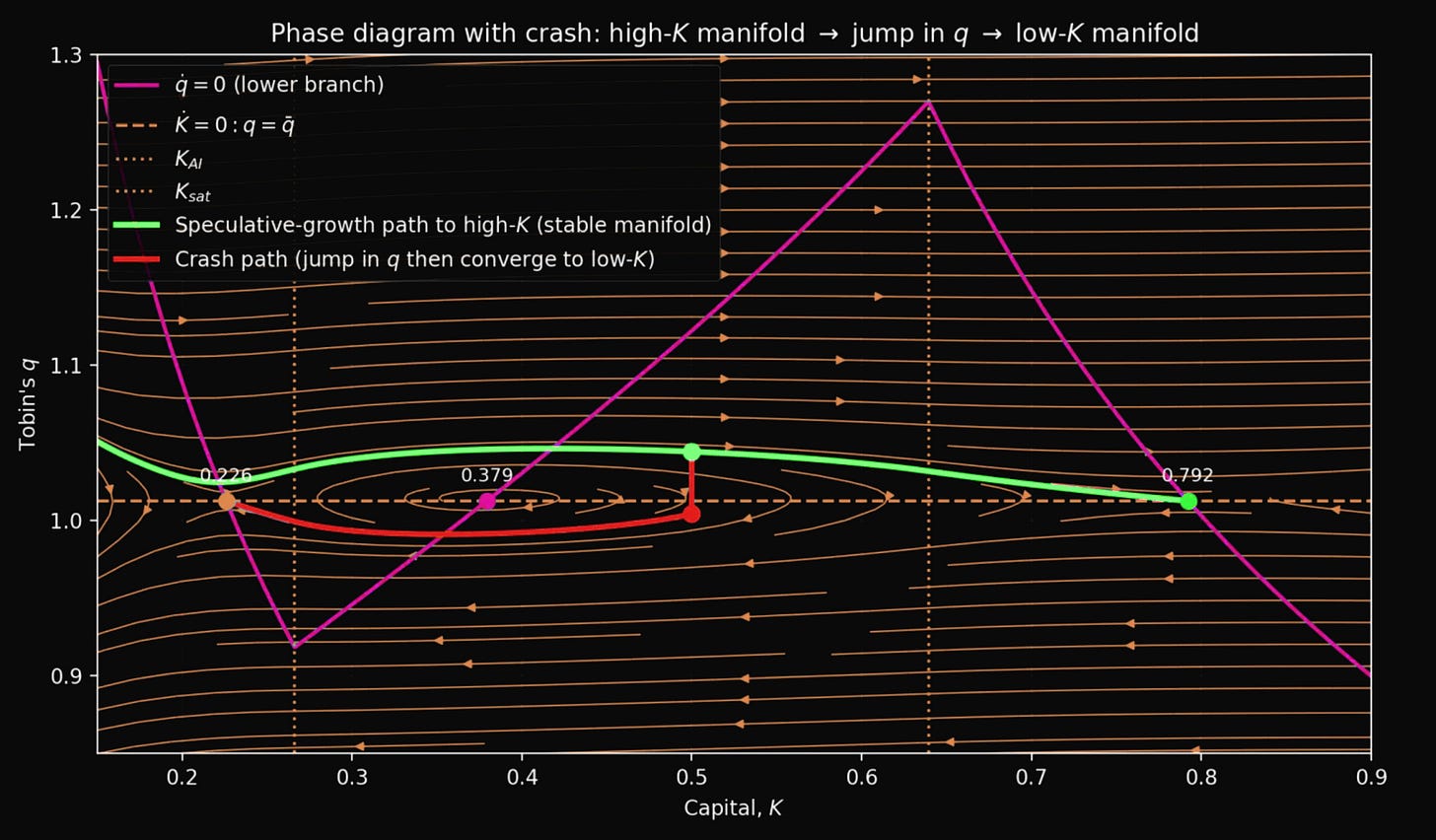

Now let’s combine these two effects. On one side, we have a flat section of IT capital returns thanks to AI. On the other, as infrastructure grows and tech billionaires get richer, the required return on that capital falls. What this means mathematically is that instead of a single equilibrium (where capital growth leads to falling productivity), the IT ecosystem can have three equilibria: two stable ones and an unstable one between them.

Think of the IT industry as a system that can come to rest at three different levels of development. These levels are defined by two variables:

K is capital, meaning the volume of IT infrastructure (data centers, GPU clusters, trained models). K_ai is the threshold for the start of AI deployment, and K_sat is the AI saturation threshold.

q is Tobin’s q, showing how expensively the market values that infrastructure relative to its actual replacement cost. That is, if a company had to build all its data centers, buildings, servers, networks, etc. from scratch today, it would cost roughly $n billion. That’s the replacement cost of capital. For example, if a company’s market value is $15 billion and its replacement cost is $10 billion, then q = 1.5.
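The same calculation as a small Python sketch. The function name `tobins_q` is just a label for this example, and the figures are the ones from the paragraph above.

```python
def tobins_q(market_value: float, replacement_cost: float) -> float:
    """Market value of a company divided by the cost of rebuilding its assets."""
    if replacement_cost <= 0:
        raise ValueError("replacement cost must be positive")
    return market_value / replacement_cost


# Example from the text: $15 billion market value, $10 billion replacement cost.
print(tobins_q(15e9, 10e9))
```

A value above 1 means the market prices the company above the cost of rebuilding it from scratch. A value below 1 means the assets are worth more than the company.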

Each equilibrium is defined by points on the chart.

The first equilibrium, called “low” (K approximately 0.226), sits to the left of the K_ai threshold, before mass AI deployment. At this stage AI exists but is used in a limited way, mainly for experimental projects rather than as a mass tool for replacing human labor. Returns on capital are high because infrastructure is scarce. But the required return for investors is also high: wealth is limited, the consumption rate is high, and investors demand a large return on their investments. These two forces balance each other out. Tobin’s q sits at a fair level of around 1.02 and the market values companies at roughly their real asset value.

This state resembles the IT industry in early 2022, before the ChatGPT revolution. Data centers are operating, cloud services are running, but AI hasn’t yet become a mass-market tool. Companies invest cautiously because they don’t see revolutionary opportunities for rapid growth. The ecosystem gets stuck at this point because nobody believes a transition to a higher state is possible, so there’s no incentive for massive investment.

The second equilibrium, called “middle” (K approximately 0.379), sits between the K_ai and K_sat thresholds, meaning inside the AI deployment zone. This is a theoretical point where infrastructure is at a medium level, AI is partially deployed, returns on capital are on a plateau thanks to the labor substitution effect, and required returns are at a medium level. Mathematically this equilibrium exists, but it’s fundamentally unstable, because in the AI deployment zone (Region II) the return on capital (r^K) is flat: it doesn’t fall as infrastructure grows.

This means there’s no natural brake on growth. The return that investors require keeps declining as their wealth grows, which creates a positive feedback loop in both directions.

If capital grows a little, wealth increases, required returns fall (investors become less demanding and are willing to accept smaller yields), investing becomes more profitable since meeting lower hurdles is easier. So capital grows even faster and the system moves toward high equilibrium.

If the reverse happens and capital falls a little, wealth decreases, required returns rise, investing becomes unprofitable, capital falls further, and the system slides back toward low equilibrium.

The IT ecosystem with the AI factor won’t linger at the second equilibrium. It will either break through it in a surge of growth on the way to high equilibrium, or roll back to the low one (along the red line). This is a little like balancing on a tightrope: you can be there for a moment but not indefinitely. In practice, the industry can cross this point while riding a wave of optimism following an AI breakthrough and keep moving forward, as long as confidence doesn’t crack.
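The tightrope behavior can be reproduced with a toy model. The sketch below is not the Caballero model: the piecewise-linear return curve, the linear required return, the thresholds, and every number in it are hypothetical, picked so that the two curves cross three times (at K = 0.2, 0.4, and 0.8). Capital is assumed to grow when the return on capital exceeds the required return and to shrink otherwise.

```python
K_AI, K_SAT = 0.3, 0.6  # hypothetical thresholds, not the article's calibration
R_FLAT = 0.10           # hypothetical plateau return inside Region II
SLOPE = 0.2             # hypothetical steepness of diminishing returns


def return_on_capital(k: float) -> float:
    """Falls before K_AI, stays flat between the thresholds, falls again after K_SAT."""
    if k < K_AI:
        return R_FLAT + SLOPE * (K_AI - k)
    if k <= K_SAT:
        return R_FLAT
    return R_FLAT - SLOPE * (k - K_SAT)


def required_return(k: float) -> float:
    """Assumption: investors accept lower returns as capital and wealth grow."""
    return 0.14 - 0.1 * k


def gap(k: float) -> float:
    """Positive gap: investing pays and capital grows. Negative gap: capital shrinks."""
    return return_on_capital(k) - required_return(k)


def simulate(k_start: float, steps: int = 3000, dt: float = 0.1) -> float:
    """Euler steps of dK/dt = gap(K); returns where capital ends up."""
    k = k_start
    for _ in range(steps):
        k += dt * gap(k)
    return k


# Start slightly below and slightly above the middle equilibrium at K = 0.4.
for start in (0.39, 0.41):
    print(f"start K={start:.2f} -> ends near K={simulate(start):.2f}")
```

In this toy setup a start just below the middle point drifts down to the low equilibrium, and a start just above it climbs to the high one. The middle point itself is never a resting place.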

The third equilibrium, called “high” (K approximately 0.792), sits to the right of the K_sat threshold, meaning after full AI saturation. Imagine that the industry is saturated with IT infrastructure: data centers are built at scale, GPU clusters are deployed everywhere, LLMs are trained to the limits of current technology, and all tasks that can be automated with today’s AI already have been. AI capital has reached its peak investment and is no longer growing, either because of technological constraints (models have hit a quality ceiling), or because training data is exhausted, or because of organizational and regulatory barriers. Returns on capital are again low because there’s an abundance of infrastructure and the classical laws of diminishing returns apply. But the required return for investors is even lower, since the ecosystem’s wealth is enormous and tech billionaires are willing to invest at minimal rates because there are very few alternatives for deploying capital. These forces again balance each other out, but at a new, higher level. Tobin’s q returns to a fair level of around 1.02, the same as in the low equilibrium.

The key paradox is that Tobin’s q in the high equilibrium is the same as in the low one (around 1.02). But the total capitalization of the industry (q × K) is 3.5 times larger, real infrastructure is enormous, ecosystem wealth is colossal, and productivity is an order of magnitude higher. The IT ecosystem is stable and wealthy. This is the hypothetical IT industry of 2030, if the transition succeeds. Every company uses AI agents for routine tasks, all data centers are packed with specialized chips, LLMs are embedded everywhere, from enterprise software to household devices. Further growth is evolutionary, without revolutions, through improvements to existing systems. Company valuations return to reasonable multiples, but against a massive asset base. The industry waits for the next qualitative breakthrough, whether true AGI, or a fundamentally new architecture based on quantum computing.
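The 3.5x figure follows directly from the equilibrium values quoted above. A quick check in Python, with variable names chosen just for this example:

```python
# Equilibrium values quoted in the text.
Q_LOW, K_LOW = 1.02, 0.226
Q_HIGH, K_HIGH = 1.02, 0.792


def capitalization(q: float, capital: float) -> float:
    """Total market value of the capital stock: q times K."""
    return q * capital


ratio = capitalization(Q_HIGH, K_HIGH) / capitalization(Q_LOW, K_LOW)
print(f"high / low capitalization: {ratio:.2f}x")
```

Because q is the same in both equilibria, it cancels out, and the ratio is simply 0.792 / 0.226.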

Where Are We Now?

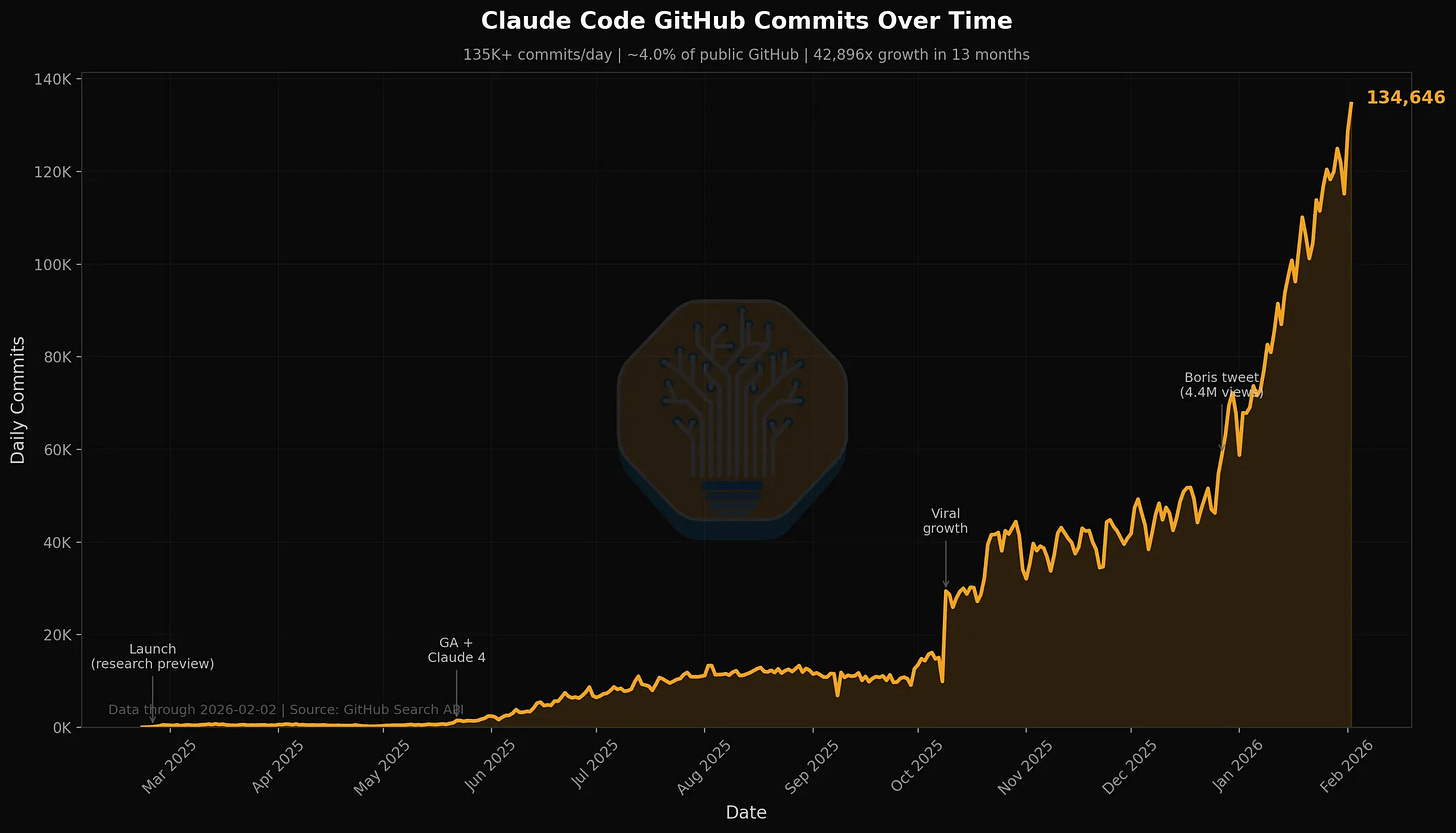

It’s quite probable that we’re currently in the critical transition phase, specifically between K_ai and K_sat. To start with, Claude Code (from Anthropic), which writes software code, accounts for 4% of all public commits on GitHub as of February 2026. Analyst forecasts suggest this figure will grow to twenty percent by the end of this year. One in every five commits in the global code repository will then be written not by a human but by artificial intelligence. Ryan Dahl, creator of Node.js, says outright that “writing syntax by hand is no longer an engineer’s job.”

According to a Stack Overflow survey, eighty-four percent of developers already use AI at work, yet only thirty-one percent have moved to full coding agents. This means the penetration curve is only just beginning, and we’re witnessing the mass deployment of a technology that replaces highly paid skilled labor.

Companies report that one developer with Claude Code now does in a week what used to take a whole team a month. A human costs $350-500 per day in fully-loaded costs, while an AI agent costs $6-7. Return on investment of ten to thirty times. With that math, you can’t stop the adoption. Now let’s return to the Caballero model and its key thresholds.

The first threshold (K_ai) is the moment when enough IT infrastructure has accumulated for mass AI deployment that begins to replace human labor. Before this threshold, AI exists but is used in a limited way. After it comes Region II, where returns on capital hold at a plateau because each new GPU cluster adds not only computing power but also virtual workers. Effective labor grows together with capital, the capital-labor ratio stays constant, and marginal returns don’t fall.

The second threshold (K_sat) is the saturation point, when AI has reached the limit of deployment under current technologies. Beyond this, growth comes only from regular infrastructure, the magic ends, and returns begin to fall again under classical laws.

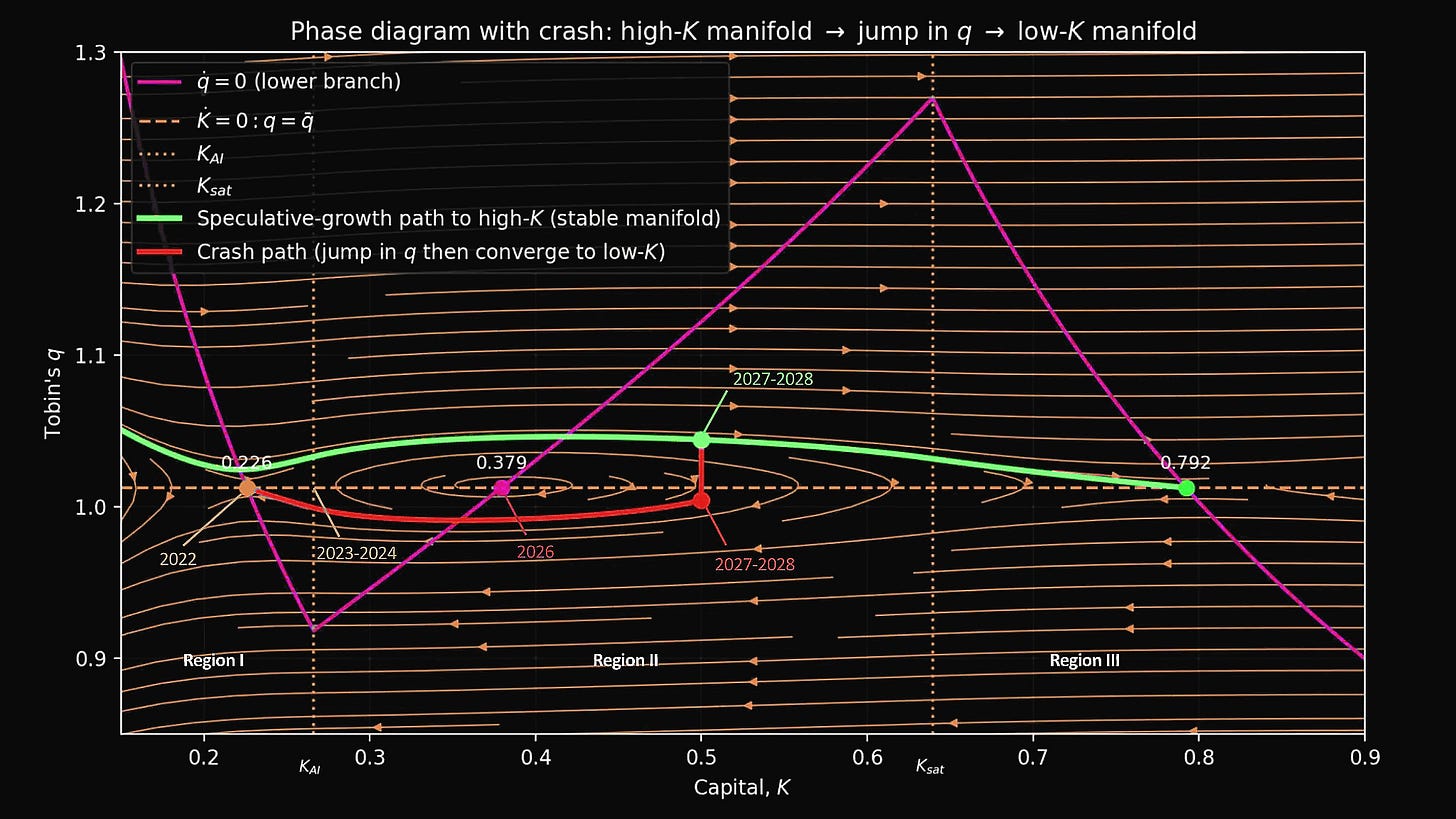

We probably already crossed the first threshold K_ai around 2024. ChatGPT launched at the end of 2022, generated an explosion of interest in 2023-2024, and 2025 became the year of mass adoption.

Now, in February 2026, we see Claude Code capturing 4% of commits and moving toward 20%. This means we’re inside Region II (the AI deployment zone), where returns are flat and rapid growth is possible without the classical constraints of diminishing returns. AI is partially displacing human labor, companies are scaling infrastructure at breakneck speed, returns are holding at a high level, and growth is limited only by the availability of computing power. Anthropic is adding more monthly revenue than OpenAI. The market is still far from saturation.

But we definitely haven’t reached the second threshold K_sat yet, since growth is not slowing but accelerating. Only 31% of developers use agents, meaning an enormous reserve for penetration growth remains untouched. The horizon of autonomous tasks that AI can perform without human involvement is doubling every four to seven months. Currently an agent can work autonomously for about five hours, but that’s far from multi-day projects like a full audit or comprehensive system refactoring.
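A back-of-the-envelope sketch shows what that doubling rate implies. The 5-hour starting point and the 4-to-7-month doubling period come from the text. The 40-hour target (roughly one working week of autonomous effort) is a hypothetical stand-in for a “multi-day project”, and the calculation assumes the doubling trend simply continues.

```python
import math


def months_to_reach(current_hours: float, target_hours: float,
                    doubling_months: float) -> float:
    """Months until the horizon grows from current to target at a fixed doubling period."""
    doublings = math.log2(target_hours / current_hours)
    return doublings * doubling_months


CURRENT_HOURS = 5   # autonomous horizon quoted in the text
TARGET_HOURS = 40   # hypothetical target: about one working week

for doubling in (4, 7):
    months = months_to_reach(CURRENT_HOURS, TARGET_HOURS, doubling)
    print(f"doubling every {doubling} months -> about {months:.0f} months")
```

Going from 5 to 40 hours takes three doublings, so roughly one to two years at the quoted pace.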

Moreover, coding is only a beachhead, not the final destination. Ahead lie enormous numbers of knowledge workers worldwide: analysts, lawyers, financial professionals, consultants, marketers. Anyone who reads information, applies knowledge, and produces structured output is in the line of fire. Accenture has signed a contract to train 30,000 employees to work with Claude, focusing on financial services, healthcare, and the public sector. These are enormous untouched markets.

So we’re somewhere between K_ai and K_sat, inside Region II, roughly a third of the way from the deployment threshold to the saturation threshold.

In that case, where does Tobin’s q sit? Again, q shows how expensively the market values companies relative to the actual replacement cost of their infrastructure. In a normal state, q is around 1.02 (fair company valuation). During speculative growth q jumps higher, and these inflated valuations are what stimulate capital expenditures: it’s profitable for companies to issue expensive shares and build data centers. In the Caballero model, however, the positive and negative scenario trajectories are drawn as abstract dynamics to keep the model easy to follow, not as a calendar-calibrated path for the real economy. The green and red trajectories are simply examples of two possible scenarios.

Real values differ based on the actual valuations of companies today. Currently q is at or near its peak, possibly in the range of 1.25-1.3. Many tech companies are trading at inflated multiples, Anthropic and OpenAI are raising billions at relatively modest revenue, and euphoria surrounds AI companies. These are the classic signs of inflated valuations.

But most importantly, these inflated valuations are not an irrational bubble. They’re entirely rational. A high q (or high valuation) is the fuel of the technological transition. Without inflated valuations there’s no investment boom, without a boom there’s no rapid infrastructure accumulation, without infrastructure you can’t reach high equilibrium. In other words, to travel from low K (capital, infrastructure volume) to high K, you need a period when q is significantly above normal. This isn’t an error but a necessary part of the mechanism.

Anthropic and OpenAI are scaling compute aggressively precisely because the market gives them capital at high valuations. This capital turns into real data centers, GPU clusters, and trained models. Speculative optimism is materializing into physical infrastructure, not just inflating useless assets.

What Could Cause a Collapse?

Right now K (capital) is roughly at the 0.379 point, q around 1.25-1.3. This is the most dangerous moment in the entire trajectory. From here, if investor confidence holds, q (the high valuation) should start declining while K continues growing toward the saturation threshold along the green trajectory and onward to high equilibrium. But if that confidence breaks, a crash begins and everything follows the “Crash path” scenario.

But what exactly could break the trajectory? There are several scenarios, and some could be happening simultaneously.

First: a premature technological ceiling. The scaling laws that predict model quality improvement with increases in size and data volume suddenly stop working. Models hit a ceiling, the autonomous task horizon stops expanding. Investors realize that further progress is impossible without a qualitative breakthrough on the level of AGI, and there isn’t one. Panic sets in.

Second: regulatory shock. Governments introduce strict restrictions on replacing humans with AI, transparency requirements kill commercial models, and bans in critical industries sharply narrow the market.

Third: economic crisis. A recession forces companies to cut capital expenditures, the GPU boom stops, investments freeze. In my view, a fairly likely scenario.

Fourth: competitive shock. Don’t dismiss the scenario where Microsoft loses ground to startups, OpenAI loses its lead to Anthropic, or Chinese models like DeepSeek crush Western company valuations by demonstrating that AI has become a commodity without meaningful product differentiation.

Fifth: public backlash. Mass layoffs of programmers generate political pressure, “AI took our jobs” narratives gain momentum, and public opinion turns against tech giants. Unlikely, but worth noting.

Microsoft, for instance, is already caught in a kind of trap between two businesses. On one side, Azure (their cloud platform), which rents GPU capacity to companies like OpenAI and Anthropic. That’s the future growth, the terminal value of the company. On the other, Office365, which is what generates enormous profit. But to earn revenue in Azure, Microsoft has to rent its infrastructure to competitors who are destroying its own products. Claude for Excel does what Copilot for Excel was supposed to do, but it was launched by an outside party.

To protect the Office suite, Microsoft has to invest heavily in AI, but those very investments are accelerating the destruction of the per-user-seat software model. The stakes are so high that Microsoft CEO Satya Nadella has effectively taken on the role of AI product chief, stepping back from day-to-day CEO duties. If Microsoft stumbles, it could trigger a chain reaction of investor panic. It would be fatal if the largest market participant lost to startups.

Meanwhile OpenAI is also under threat. Anthropic is outpacing them in monthly revenue growth, and Claude Code is outpacing GitHub Copilot and the ChatGPT API in adoption rates. OpenAI risks becoming just a provider of compute tokens rather than a company building full-stack solutions and AI agents. If the market leader loses, investors begin to doubt the durability of the entire ecosystem.

Any of these triggers, or a combination of them, could cause q to drop sharply. AI company stock prices collapse over days or weeks. q falls from 1.3 to, say, 1.0 or even 0.95. The industry slips off the green trajectory and lands on the red one, back toward low equilibrium.

Then capital expenditures freeze. Companies cancel GPU orders, halt data center construction. Startups don’t get funded and go bankrupt. Infrastructure stops growing and existing infrastructure begins to degrade (depreciation consuming capital faster than new capital is added).

Suppose that in 2027-2028 capital starts falling, after which the industry slowly rolls back to the left on the chart, losing all the progress of recent years. A few years later, around 2030, everything returns to the starting point: K around 0.22, low equilibrium, with q back at its fair level of 1.02. All AI infrastructure is written off as non-functional, the industry has returned to a pre-ChatGPT state. This is a self-fulfilling crash, where pessimism reduces valuations, which strangles investment, which makes the future worse.

The Alternative

The alternative is that confidence is preserved and no serious trigger fires. If the coordination of expectations between investors, companies, and consumers holds long enough, the trajectory continues.

2026-2028: Claude Code becomes the standard, capturing 20%+ of commits. Accenture trains tens of thousands of employees, and financial institutions, consulting firms, and law firms begin automation. The autonomous task horizon keeps growing, from five hours to twelve hours to multi-day projects. Capital grows from 0.4 to 0.6. q starts declining from its peak of 1.3 toward 1.2, because capital accumulation and wealth growth lower the required investor return.

In this hypothetical scenario, around 2028-2029 the industry crosses the K_sat threshold. This will be the inflection point when all the obvious applications of current LLMs are exhausted. Coding is fully automated. Customer support, data entry, and basic analytics are automated. Capital invested in AI reaches its maximum and freezes. Further infrastructure growth continues only through regular servers (no new virtual employees are added and the effective total workforce stabilizes). The magic of Region II ends, and returns on capital begin to fall again under classical laws. But by this point the industry is already far ahead.

q continues declining from 1.2 toward 1.1, because inflated valuations are no longer needed, accumulated infrastructure is enormous, and required returns have fallen to historical lows.

In 2029-2030 and beyond, capital (K) slowly grows from 0.64 toward 0.79. Growth slows because returns are falling. Investment continues, but it’s no longer a boom, just steady accumulation. The wages of remaining workers (those AI didn’t replace) start growing because capital deepens and productivity rises, though a significant share of the population may remain unemployed. Labor’s share of revenue stabilizes at a new, lower level. q continues falling from 1.1 toward 1.02. By the end of 2030 the industry reaches capital of around 0.79, q returns to fair value, and high equilibrium is achieved.

In this scenario IT infrastructure is enormous. AI is fully integrated into all processes, and every company uses LLM agents for routine work. Programmers, analysts, and designers work at the level of architectural decisions and strategy, with details delegated to AI. The share of wages in tech company revenue is lower, but productivity is an order of magnitude higher. Company valuations return to reasonable multiples, but total industry capitalization is colossal because the asset base has grown many times over. The industry waits for the next qualitative breakthrough, whether true AGI, quantum computers, or a fundamentally new architecture. That scenario sounds like a fairy tale, nothing less.

Conclusion

Don’t forget: Region II, where we currently are, is the most dangerous moment of the entire transition. The next year or two could decide everything. Either we’ll pass the peak and begin the descent toward high equilibrium, materializing speculative optimism into a real economic transformation. Or confidence will break, valuations will collapse, and we’ll roll back down, writing off the AI boom as yet another bubble.

The Caballero model shows that both of these outcomes are rational, both are possible, and the choice between them depends not on fundamental factors that can be measured today, but on the collective belief of market participants that the transition will complete successfully. This is a coordination game where everyone wins if everyone keeps believing, and everyone loses if someone doubts first. That’s why all of this is considered rational but fragile.

Hold on tighter to the bar: the wind is picking up.